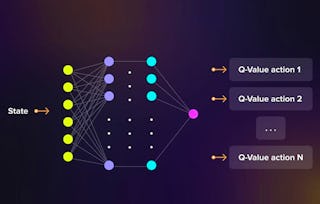

In this course, you will learn about several algorithms that can learn near-optimal policies through trial-and-error interaction with the environment, that is, from the agent's own experience. Learning from actual experience is striking because it requires no prior knowledge of the environment's dynamics, yet can still attain optimal behavior. We will cover intuitively simple but powerful Monte Carlo methods, and temporal difference learning methods including Q-learning. We will wrap up this course by investigating how we can get the best of both worlds: algorithms that combine model-based planning (similar to dynamic programming) and temporal difference updates to radically accelerate learning.
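To give a flavor of the temporal difference methods mentioned above, here is a minimal sketch of tabular Q-learning, not taken from the course materials. The toy chain environment (`chain_step`), the function names, and the values of `alpha`, `gamma`, and `epsilon` are all assumptions chosen for illustration. The agent never sees the environment's dynamics; it only observes sampled transitions.

```python
import random


def q_learning_update(q, state, action, reward, next_state, done,
                      alpha=0.1, gamma=0.9):
    """Apply one tabular Q-learning update in place; return the TD error."""
    # Bootstrap from the greedy value of the next state (zero if terminal).
    best_next = 0.0 if done else max(q[next_state])
    td_error = reward + gamma * best_next - q[state][action]
    q[state][action] += alpha * td_error
    return td_error


def chain_step(state, action, n_states=5):
    """Hypothetical toy environment: action 1 moves right, action 0 moves left.

    Reaching the right-most state ends the episode with reward 1.
    """
    if action == 1:
        next_state = min(state + 1, n_states - 1)
    else:
        next_state = max(state - 1, 0)
    done = next_state == n_states - 1
    reward = 1.0 if done else 0.0
    return next_state, reward, done


def train(episodes=500, n_states=5, epsilon=0.1, seed=0):
    """Learn action values purely from sampled experience (epsilon-greedy)."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(n_states)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Explore with probability epsilon, and break ties at random.
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                action = rng.randrange(2)
            else:
                action = 0 if q[state][0] > q[state][1] else 1
            next_state, reward, done = chain_step(state, action, n_states)
            q_learning_update(q, state, action, reward, next_state, done)
            state = next_state
    return q


if __name__ == "__main__":
    for s, values in enumerate(train()):
        print(s, [round(v, 3) for v in values])
```

Running the script prints the learned action values per state. In this sketch the update uses only the sampled tuple (state, action, reward, next state), which is what makes the method sample-based.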

Sample-based Learning Methods

This course is part of the Reinforcement Learning Specialization

Instructors: Martha White

38,292 already enrolled

1,256 reviews

5 assignments

There are 5 modules in this course

Learner reviews

- 5 stars: 82.33%
- 4 stars: 13.20%
- 3 stars: 2.78%
- 2 stars: 0.63%
- 1 star: 1.03%

Showing 3 of 1256

Reviewed on Feb 27, 2020

It was good in substance, but there are plenty of issues with the automated grader. You spend most of your time dealing with the latter rather than actually learning the material.

Reviewed on Jul 15, 2023

It was a good course, but I was expecting more explanation of the subjects in the book. For example, Prioritized Sweeping was missing, and the videos are not instructive enough.

Reviewed on Mar 13, 2022

The videos are very clear and do a good job explaining the material from the textbook. The assignments are relevant and just right in terms of length and difficulty.
