Dans ce cours, vous apprendrez plusieurs algorithmes qui peuvent apprendre des politiques presque optimales basées sur l'interaction d'essais et d'erreurs avec l'environnement - l'apprentissage à partir de l'expérience de l'agent. L'apprentissage à partir de l'expérience réelle est frappant parce qu'il ne nécessite aucune connaissance préalable de la dynamique de l'environnement, tout en permettant d'atteindre un comportement optimal. Nous aborderons des méthodes de Monte Carlo intuitivement simples mais puissantes, ainsi que des méthodes d'apprentissage par différence temporelle, y compris l'apprentissage Q. A la fin de ce cours, vous serez capable de : - Comprendre l'apprentissage par différence temporelle et Monte Carlo comme deux stratégies pour estimer les fonctions de valeur à partir de l'expérience échantillonnée - Comprendre l'importance de l'exploration, lorsque l'on utilise l'expérience échantillonnée plutôt que les balayages de programmation dynamique dans un modèle - Comprendre les liens entre Monte Carlo et la programmation dynamique et la TD.

Méthodes d'apprentissage par échantillonnage

Méthodes d'apprentissage par échantillonnage

Ce cours fait partie de Spécialisation "Apprentissage par renforcement"

Instructeurs : Martha White

37 864 déjà inscrits

Inclus avec

1,254 avis

Expérience recommandée

Compétences que vous acquerrez

- Catégorie : Algorithmes d'apprentissage automatique

- Catégorie : Algorithmes

- Catégorie : Simulations

- Catégorie : Intelligence artificielle et apprentissage automatique (IA/ML)

- Catégorie : Échantillonnage (statistiques)

- Catégorie : Distribution de probabilité

- Catégorie : Apprentissage automatique

- Catégorie : Apprentissage par renforcement

- Section Compétences masquée. Affichage de 6 compétence(s) sur 8.

Détails à connaître

Ajouter à votre profil LinkedIn

5 devoirs

Découvrez comment les employés des entreprises prestigieuses maîtrisent des compétences recherchées

Élaborez votre expertise du sujet

- Apprenez de nouveaux concepts auprès d'experts du secteur

- Acquérez une compréhension de base d'un sujet ou d'un outil

- Développez des compétences professionnelles avec des projets pratiques

- Obtenez un certificat professionnel partageable

Il y a 5 modules dans ce cours

Bienvenue au deuxième cours de la spécialisation en apprentissage par renforcement : Méthodes d'apprentissage basées sur des échantillons, offert par l'Université de l'Alberta, Onlea et Coursera. Dans ce module pré-cours, vous serez présenté à vos instructeurs et aurez un aperçu de ce que le cours vous réserve. N'oubliez pas de vous présenter à vos camarades de classe dans la section "Meet and Greet" !

Inclus

2 vidéos2 lectures1 sujet de discussion

Cette semaine, vous apprendrez à estimer les fonctions de valeur et les politiques optimales, en utilisant uniquement l'expérience échantillonnée de l'environnement. Ce module représente notre première étape vers des méthodes d'apprentissage incrémental qui apprennent à partir de l'interaction de l'agent avec le monde, plutôt qu'à partir d'un modèle du monde. Vous découvrirez les méthodes de prédiction et de contrôle avec et sans politique, en utilisant les méthodes de Monte Carlo, c'est-à-dire des méthodes qui utilisent des retours échantillonnés. Vous serez également réintroduit dans le problème de l'exploration, mais plus généralement en RL, au-delà des bandits.

Inclus

11 vidéos3 lectures1 devoir1 devoir de programmation1 sujet de discussion

Cette semaine, vous découvrirez l'un des concepts les plus fondamentaux de l'apprentissage par renforcement : l'apprentissage par différence temporelle (TD). L'apprentissage par différence temporelle combine certaines caractéristiques des méthodes de Monte Carlo et de programmation dynamique (PD). Les méthodes TD sont similaires aux méthodes Monte Carlo en ce qu'elles peuvent apprendre de l'interaction de l'agent avec le monde, et ne nécessitent pas la connaissance du modèle. Les méthodes de TD sont similaires aux méthodes de DP dans la mesure où elles s'amorcent et peuvent donc apprendre en ligne, sans attendre la fin d'un épisode. Vous verrez comment la méthode TD peut apprendre plus efficacement que la méthode Monte Carlo, grâce au bootstrap. Pour ce module, nous nous concentrons d'abord sur la TD pour la prédiction, et nous aborderons la TD pour le contrôle dans le module suivant. Cette semaine, vous mettrez en œuvre la TD pour estimer la fonction de valeur pour une politique fixe, dans un domaine simulé.

Inclus

6 vidéos2 lectures1 devoir1 devoir de programmation1 sujet de discussion

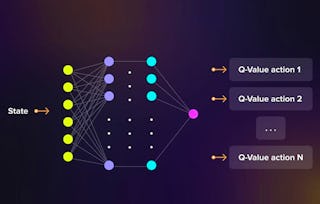

Cette semaine, vous apprendrez à utiliser l'apprentissage par différence temporelle pour le contrôle, en tant que stratégie d'itération de politique généralisée. Vous verrez trois algorithmes différents basés sur le bootstrapping et les équations de Bellman pour le contrôle : Sarsa, Q-learning et Expected Sarsa. Vous verrez certaines des différences entre les méthodes pour le contrôle avec et sans politique, et que Expected Sarsa est un algorithme unifié pour les deux. Vous mettrez en œuvre Expected Sarsa et Q-learning, sur Cliff World.

Inclus

9 vidéos3 lectures1 devoir1 devoir de programmation1 sujet de discussion

Jusqu'à présent, vous pouviez penser que l'apprentissage avec et sans modèle constituait deux stratégies distinctes et, d'une certaine manière, concurrentes : la planification avec la programmation dynamique et l'apprentissage basé sur l'échantillonnage via les méthodes de TD. Cette semaine, nous unifions ces deux stratégies avec l'architecture Dyna. Vous apprendrez à estimer le modèle à partir des données, puis à utiliser ce modèle pour générer une expérience hypothétique (un peu comme dans un rêve) afin d'améliorer considérablement l'efficacité de l'échantillonnage par rapport aux méthodes basées sur l'échantillonnage telles que l'apprentissage Q. En outre, vous apprendrez à concevoir des systèmes d'apprentissage robustes aux modèles imprécis.

Inclus

11 vidéos4 lectures2 devoirs1 devoir de programmation1 sujet de discussion

Obtenez un certificat professionnel

Ajoutez ce titre à votre profil LinkedIn, à votre curriculum vitae ou à votre CV. Partagez-le sur les médias sociaux et dans votre évaluation des performances.

Instructeurs

En savoir plus sur Apprentissage automatique

Statut : Prévisualisation

Statut : PrévisualisationColumbia University

Statut : Prévisualisation

Statut : PrévisualisationNortheastern University

Statut : Prévisualisation

Statut : PrévisualisationNortheastern University

Statut : Prévisualisation

Statut : PrévisualisationSimplilearn

Pour quelles raisons les étudiants sur Coursera nous choisissent-ils pour leur carrière ?

Felipe M.

Jennifer J.

Larry W.

Chaitanya A.

Avis des étudiants

- 5 stars

82,31 %

- 4 stars

13,22 %

- 3 stars

2,78 %

- 2 stars

0,63 %

- 1 star

1,03 %

Affichage de 3 sur 1254

Révisé le 27 févr. 2020

Itwasgoodinsubstane but there is plenty of issues with the automated grader. you spend most time dealing with the letter not on actual learning of the matter.

Révisé le 13 mars 2022

The videos are very clear and do a good job explaining the material from the textbook. The assignments are relevant and just right in terms of length and difficulty.

Révisé le 14 févr. 2021

Excellent course that naturally extends the first specialization course. The application examples in programming are very good and I loved how RL gets closer and closer to how a living being thinks.

Ouvrez de nouvelles portes avec Coursera Plus

Accès illimité à 10,000+ cours de niveau international, projets pratiques et programmes de certification prêts à l'emploi - tous inclus dans votre abonnement.

Faites progresser votre carrière avec un diplôme en ligne

Obtenez un diplôme auprès d’universités de renommée mondiale - 100 % en ligne

Rejoignez plus de 3 400 entreprises mondiales qui ont choisi Coursera pour les affaires

Améliorez les compétences de vos employés pour exceller dans l’économie numérique

Foire Aux Questions

Pour accéder aux supports de cours, aux devoirs et pour obtenir un certificat, vous devez acheter l'expérience de certificat lorsque vous vous inscrivez à un cours. Vous pouvez essayer un essai gratuit ou demander une aide financière. Le cours peut proposer l'option "Cours complet, pas de certificat". Cette option vous permet de consulter tous les supports de cours, de soumettre les évaluations requises et d'obtenir une note finale. Cela signifie également que vous ne pourrez pas acheter un certificat d'expérience.

Lorsque vous vous inscrivez au cours, vous avez accès à tous les cours de la spécialisation et vous obtenez un certificat lorsque vous terminez le travail. Votre certificat électronique sera ajouté à votre page Réalisations - de là, vous pouvez imprimer votre certificat ou l'ajouter à votre profil LinkedIn.

Oui, pour certains programmes de formation, vous pouvez demander une aide financière ou une bourse si vous n'avez pas les moyens de payer les frais d'inscription. Si une aide financière ou une bourse est disponible pour votre programme de formation, vous trouverez un lien de demande sur la page de description.

Plus de questions

Aide financière disponible,